A new study by the Columbia University Climate School has found that all of the 28 most populous cities in the United States are sinking to some extent. This phenomenon of subsidence is not just taking place in cities on the coast, where relative sea level is an issue, but also in cities in the interior.

The primary cause of subsidence is large-scale groundwater extraction for human use. When water is withdrawn from aquifers made up of fine-grained sediments, the pore spaces formerly occupied by water can eventually collapse, leading to compaction below and sinkage at the surface.

The fastest-sinking city in the U.S. is Houston, with more than 40% of its area subsiding more than 5 millimeters a year and 12% sinking at twice that rate. Some local spots are going down as much as 5 centimeters a year. These seem like very small numbers, but because subsidence is often not uniform across an urban area, it puts stress on building foundations and other infrastructure. Parts of Las Vegas, Washington, D.C., and San Francisco have particularly fast-sinking zones.

There are other causes of subsidence. In Texas, pumping of oil and gas adds to the phenomenon. A 2023 study found that New York City’s more than one million buildings are pressing down on the Earth so hard that they may be contributing to the city’s ongoing subsidence. About 1% of the total area of the country’s 28 largest cities faces some danger from uneven subsidence.

Overall, some 34 million Americans live in cities affected by subsidence. Cities around the world facing especially rapid subsidence include Jakarta, Venice, and, here in the U.S., New Orleans.

**********

Web Links

All of the Biggest U.S. Cities Are Sinking

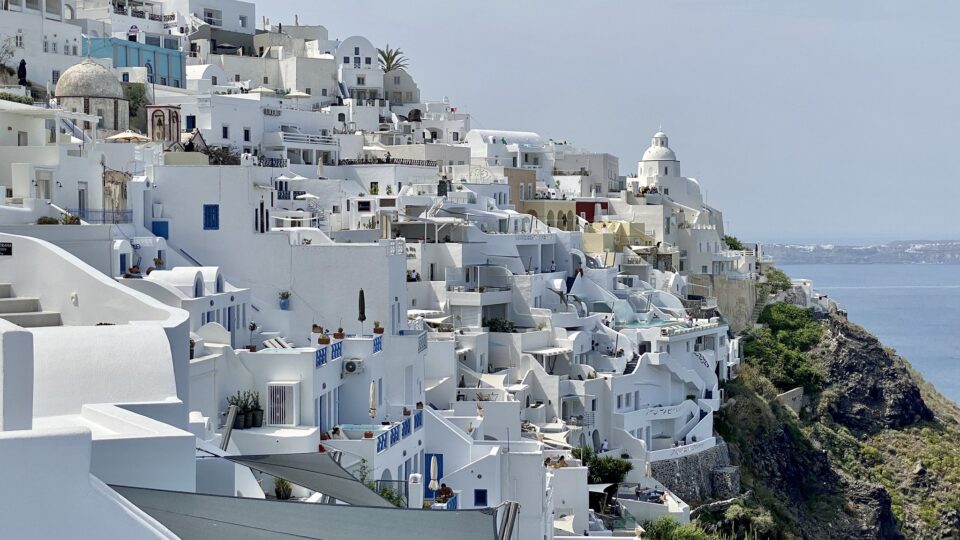

Photo, posted December 27, 2012, courtesy of Katie Haugland Bowen via Flickr.

Earth Wise is a production of WAMC Northeast Public Radio